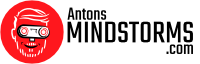

I’ve always been fascinated by the behavior of flocks and herds, where many animals move with seeming coordination. I was lucky to get the chance to work with Laurens Valk on simulating such behavior with robots.

In the video you can see the project starting to come together. Here’s a list of problems we had to tackle.

1. Indoor precision Positioning of EV3 robots

For the robots to be able to avoid each other and cooperate it is important that they know where the other robots are in relation to them. For this we used the triangular markers you see on the backs of the robots. They are spotted by a webcam above the field. The markers are all unique and show an ID, position and orientation to the camera. Converting dimensions in camera pixels to real world relative positions proved to be some interesting linear algebra. I will go into that in a full separate post.
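As a rough illustration of the kind of linear algebra involved, here is a minimal sketch of turning two marker positions in camera pixels into a position relative to one robot. It assumes a camera looking straight down with a uniform scale and no lens distortion, which is a simplification of the real setup. The function name, the `cm_per_pixel` parameter and the angle convention are made up for this example.

```python
import math

def to_robot_frame(own_px, own_heading, other_px, cm_per_pixel):
    """Express another marker's position relative to this robot.

    own_px, other_px: (x, y) marker positions in camera pixels.
    own_heading: this robot's heading in radians, measured in the image
                 frame from the +x axis toward the +y axis.
    cm_per_pixel: assumed uniform scale of the overhead camera.
    Returns (forward, side) in centimeters, where 'side' points in the
    direction of the image +y axis rotated by the heading.
    """
    # Offset between the two markers, scaled to real-world units.
    dx = (other_px[0] - own_px[0]) * cm_per_pixel
    dy = (other_px[1] - own_px[1]) * cm_per_pixel
    # Rotate the offset by minus the heading to get robot-relative axes.
    c, s = math.cos(own_heading), math.sin(own_heading)
    forward = c * dx + s * dy
    side = -s * dx + c * dy
    return forward, side
```

For example, with a scale of 0.5 cm per pixel, a marker 50 pixels along the image x axis from a robot with heading 0 ends up 25 cm straight ahead.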

2. Wireless Communication between EV3 robots

The next challenge was to actually share the positions with all the robots over some wireless networking system. The most important requirement was that the communication had to be real time. The most reliable solution I could find was a direct UDP socket connection over Wi-Fi. I will go into this communication challenge, and how to implement it in Python, in another separate post.

[python]

data = gzip.compress(pickle.dumps(robot_data[robot_id]))

try:

result = self.server_socket.sendto(data,('255.255.255.255',port))

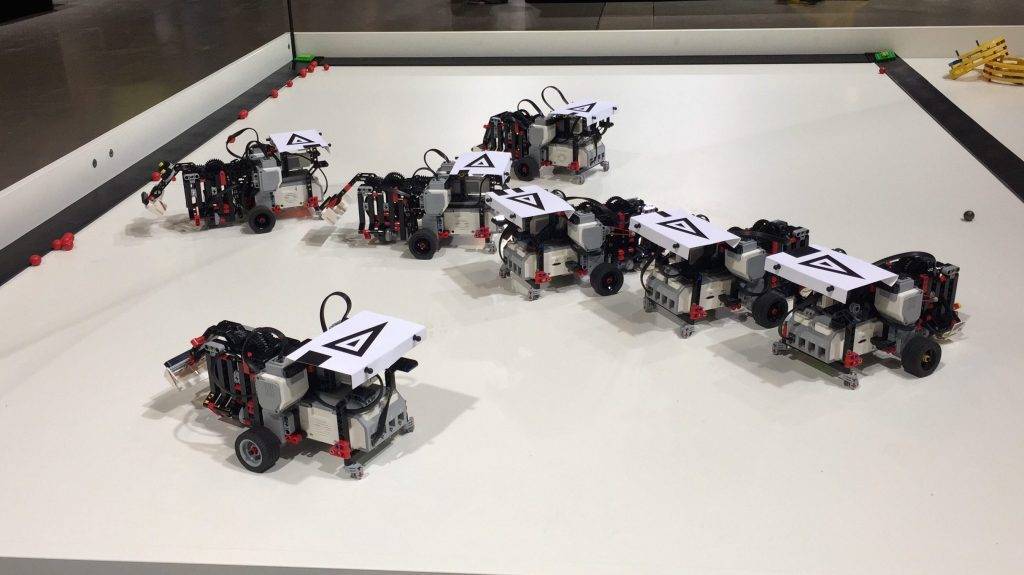

[/python] 3. Movement and pathfinding for MINDSTORMS robots

Now that each robot knows where it is, it has to decide where to go. The best method here proved to be a set of virtual springs. Some springs push the robots away from each other, other springs push the robots away from the walls, and yet other springs attract robots to the balls on the table. All the virtual spring forces can be calculated from the position of the robot relative to the other robots, the walls and the balls. Mathematically you can then combine all spring forces into one vector. That vector is where we want the robot to move. That would be easy with Rotacasters or omniwheels, because they can start moving in any direction at any time. But we wanted to limit ourselves to one basic MINDSTORMS set. So on to the next challenge.
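To make the spring idea concrete, here is a minimal sketch of how such forces could be combined into one vector. It is not the code from the project: the stiffness values, the rest lengths and the function names are assumptions chosen for illustration, and every input is a list of relative (dx, dy) offsets from the robot.

```python
import math

# Assumed tuning constants, purely illustrative.
ROBOT_STIFFNESS, ROBOT_REST = 2.0, 30.0   # push away when closer than 30 cm
WALL_STIFFNESS, WALL_REST = 3.0, 15.0     # push away when closer than 15 cm
BALL_STIFFNESS = 1.0                      # always pull toward balls

def spring(dx, dy, stiffness, rest_length=0.0, push_only=False):
    """Hooke's-law force of a virtual spring attached at offset (dx, dy)."""
    dist = math.hypot(dx, dy)
    if dist == 0.0:
        return (0.0, 0.0)  # direction undefined, so apply no force
    stretch = dist - rest_length
    if push_only and stretch > 0.0:
        return (0.0, 0.0)  # a repelling spring only acts when compressed
    scale = stiffness * stretch / dist
    return (scale * dx, scale * dy)

def net_force(robots, balls, walls):
    """Sum the spring forces from other robots, balls and nearest wall points."""
    fx = fy = 0.0
    for dx, dy in robots:
        f = spring(dx, dy, ROBOT_STIFFNESS, ROBOT_REST, push_only=True)
        fx, fy = fx + f[0], fy + f[1]
    for dx, dy in walls:
        f = spring(dx, dy, WALL_STIFFNESS, WALL_REST, push_only=True)
        fx, fy = fx + f[0], fy + f[1]
    for dx, dy in balls:
        f = spring(dx, dy, BALL_STIFFNESS)
        fx, fy = fx + f[0], fy + f[1]
    return (fx, fy)
```

A compressed spring gives a force pointing away from the obstacle, while a ball spring with zero rest length always pulls toward the ball, so a single sum covers both avoiding and seeking.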

4. MINDSTORMS EV3 collision avoidance

The solution for movement was attaching the virtual springs to a point in front of the driving wheels. Imagine you are pulling a sulky horse cart. At any time you can step forward, backward or sideways. Now imagine a swiveling shopping cart wheel in the position where you were standing, and yourself sitting where the cart driver normally sits. By turning the cart’s wheels with your hands, you can pull the swiveling wheel in any direction at any time! This is how we attached the virtual springs to a two-wheeled robot base. We calculate the needed movement of the swivel wheel by combining all the spring forces there, and then just turn the driving wheels accordingly.
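The swivel wheel picture can be written down with standard differential-drive kinematics. The sketch below is an illustration under assumptions, not the project's code: `point_offset` (distance from the wheel axle to the imaginary swivel wheel) and `track_width` (distance between the driving wheels) are hypothetical parameter names, and the desired velocity is given in the robot's own frame with x forward and y to the left.

```python
def wheel_speeds(vx, vy, point_offset, track_width):
    """Wheel rim speeds that move a point ahead of the axle with velocity (vx, vy).

    For a two-wheeled base, a point at distance point_offset in front of
    the axle moves forward with the base speed v and sideways with
    omega * point_offset, where omega is the turn rate (positive is
    counterclockwise). Inverting that gives v and omega directly.
    """
    v = vx                      # forward speed of the base
    omega = vy / point_offset   # turn rate needed for the sideways motion
    left = v - omega * track_width / 2.0
    right = v + omega * track_width / 2.0
    return left, right
```

Asking the point to move purely sideways gives wheel speeds of equal size and opposite sign, so the robot turns on the spot and swings the point in the requested direction.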

5. Picking up Bionicle balls with a MINDSTORMS robot

Picking up balls was another challenge that was more difficult than expected. We wanted to limit ourselves to one motor for both picking up and releasing the balls at will, while also having some storage inside the robot. The pickup mechanism also needed to cope with some positioning imprecision, so it had to pick up balls that could be off by centimeters from the feeding point. I will also dedicate a post to all the failed prototypes here.

6. Different states for collecting, dumping and recharging

The last challenge was to be able to show different behaviours in the robot: driving to the depot with a full belly, seeking balls while avoiding others, signalling an empty battery, and so on. For this, the robot program has a ‘state machine’. It executes its behaviour seven times per second, but switches to a different behaviour when the situation arises. For instance, after picking up five balls it switches to ‘drive to depot’ mode for releasing the balls. In every mode different forces, targets and spring calculations apply.
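A state machine like this can be sketched in a few lines. Only the switch to the depot after five balls comes from the description above. The state names, the other transitions and the inputs `at_depot` and `battery_low` are assumptions made up for this example.

```python
from enum import Enum

class State(Enum):
    SEEK_BALLS = 1
    DRIVE_TO_DEPOT = 2
    LOW_BATTERY = 3

FULL_LOAD = 5  # switch to the depot after picking up five balls

def next_state(state, balls_held, at_depot, battery_low):
    """Decide which behaviour to run on the next tick of the control loop."""
    if battery_low:
        return State.LOW_BATTERY            # assumed: battery overrides everything
    if state is State.LOW_BATTERY:
        return State.SEEK_BALLS             # assumed: resume once recharged
    if state is State.SEEK_BALLS and balls_held >= FULL_LOAD:
        return State.DRIVE_TO_DEPOT
    if state is State.DRIVE_TO_DEPOT and at_depot and balls_held == 0:
        return State.SEEK_BALLS             # assumed: go back after dumping
    return state                            # otherwise keep the current behaviour
```

The control loop would call `next_state` once per tick, roughly seven times per second, and then run the spring calculation that belongs to the returned state.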

Which challenge should I detail first? Let me know on Facebook, YouTube or Google Plus!

Hello, can I get the code for detecting the balls, the field and the robots?